DCIM For Dummies

DCIM

Data Center Infrastructure Management (DCIM) is the discipline of managing the physical infrastructure of a data center and optimizing its ongoing operation. DCIM is a software suite that bridges the traditional gap between IT and the facilities groups and coordinates between the two. DCIM reduces computing costs while making it easier to quickly support new applications and other business requirements.

In This Chapter

- Examining the evolution of data centers

- Seeing what DCIM has to offer

- Looking at the importance and stakeholders of DCIM

- Overviewing DCIM goals

- Looking at DCIM today

- Aligning IT with business needs

Try saying Data Center Infrastructure Management ten times fast. Now you know why everybody calls it DCIM instead. It's a complex subject, and DCIM means different things to different people. Basically, though, DCIM is a resource and capacity planning business management solution for the data center. DCIM as a separate field came into being in response to changes in the operating environment that required better management of operations that had been ad hoc in the past.

This chapter explains how data centers have evolved and how they were haphazardly managed before comprehensive, integrated DCIM software became available. This chapter also discusses how the demand for ever-increasing performance from a data center has driven the evolution of increasingly powerful solutions to help manage all the infrastructure a data center needs, including hardware, software, and facilities resources.

How Data Centers Have Evolved

Ever since computers were first used for business tasks, the specific needs of each application in aggregate have dictated the size, capacity, and specific configuration of the computing environment. As businesses grow and the number of computing projects increases, their data center needs grow and evolve as well. Because challenges and opportunities differ from one business to another, the computing resources of each tend to evolve in different ways.

To meet these new application needs, the data center is constantly changing. Equipment is added or removed regularly. Older equipment is exchanged for newer, more powerful or more cost-effective machines. At times, data center change can be sweeping and disruptive. After a few years of ad-hoc changes combined with normal employee turnover, nobody knows exactly what equipment exists, why or where it is installed, who owns it, or even what it does.

Data centers that have grown haphazardly as requirements have shifted have often become massive structures that are difficult to support. All too often, band-aid fixes are applied that relieve a problem for a short time but leave the underlying structural and ongoing management problems untouched.

What Does DCIM Provide?

Many enterprise-level organizations have comprehensive, integrated software suites that oversee and manage key aspects of their business, such as sales, finance, manufacturing, and shipping. The larger the organization, the more critical it is to have such overarching visibility and control over core concerns. The same logic calls for comprehensive, integrated control over the enterprise's data center, which has typically become an organization's largest and most valuable asset.

Today, IT management is looking for its own purpose-built enterprise-class business management suite for the entire data center. Managers are looking for something that ties together everything from the physical component layer, through the virtual and logical layers, and up to the actual delivery of information and control to IT personnel. The desire is to move from a tactical approach aimed at keeping bad things from happening to a strategic implementation of well-organized management technology. The aim is to capture years of data center asset-management expertise and present it as sets of business management processes and specific supporting tools. The result is effective ongoing management of the business at hand, which is definable, accountable, defendable, supportable, and consistent.

In simple terms, DCIM is the strategic business management solution for the data center. DCIM is the structured approach to managing change, purpose-built for the data center. That change encompasses the facility itself, as well as all of the IT components housed within it. Perhaps more than anything else, DCIM's value is in its capability to manage change workflows for the business of IT at the physical infrastructure level and to tie these physical structures to the applications that live on top of that physical infrastructure.

In the course of its evolution, DCIM has become a data center management extension to a number of other systems, including asset and service management, financial general ledgers, and other core business systems. A well-deployed DCIM solution quantifies the costs associated with moving, adding, or changing equipment on the data center floor. It understands the cost and complexity of operation of those assets, and clearly identifies the value that each asset provides over its lifespan. The views provided by DCIM serve to bring together the IT and the facilities world.

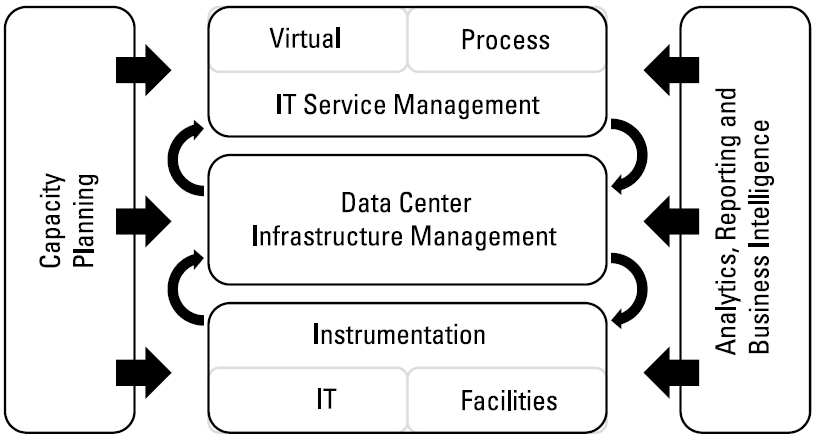

Figure 1-1 shows how DCIM stands between IT and facilities and joins them together. The physical assets of the facility, such as floor space, electrical power, environmental control, and cooling are monitored and controlled by DCIM processes, which then interface with the virtual infrastructure overseen by the IT function. The DCIM suite provides an overview of system health and functioning, and also enables drilling down to any desired level of detail for fine-grained control of operations.

As a category, DCIM can be pretty broadly defined. In addition to the comprehensive software suites described previously, anyone who sells any component of the system can claim to be a DCIM vendor, including those that provide network-addressable power strips or environmental sensors. A well-conceived DCIM implementation will generally include several such vendor solutions, all working together. For example, a core DCIM management suite might be coupled with intelligent power appliances as well as several different environment sensor solutions.

Figure 1-1: DCIM is the layer of infrastructure that supports the enterprise IT function.

Of all the advantages that DCIM provides, perhaps the most important is that it breaks down the walls of the silos that IT and facilities have been living in and enables those groups to work together more closely to satisfy the needs of the business. The overall goals are to reduce the cost of doing the computing work that the data center is charged with doing and increase the responsiveness to changing business needs.

Computing cost has many components. Some are IT-specific, some are facilities-specific, and some relate to the interface between the two. Prior to the implementation of a DCIM system, some of the savings available at that interface can't be realized because neither group has the broader view. The DCIM platform exposes these costs and allows scrutiny, which is the first step toward reducing or eliminating them.

The most important IT aspects of DCIM

The IT aspects of DCIM are primarily concerned with resource and process management. Do you have enough space and power in the right place for new application rollout? Are the provisioning, remediation, and decommissioning workflows the correct ones? Are these processes repeatable and defendable? These are the main questions that IT managers are asking, and they're looking to DCIM for the answers. Today's DCIM suites should be able to give answers to these questions with an easy-to-comprehend visual representation of the state of the system at any point in time.

The most important facilities aspects of DCIM

To the facilities manager, visibility of the real-time status of energy usage and cooling effectiveness is important, as is the capability to manage capacity-related planning and what-if scenarios. Job one is to provide an environment that enables continuing, trouble-free operation. This must also be accompanied by a clear understanding of the impact of new projects on power and cooling resources.

How DCIM relates to business goals

IT and facilities management must constantly maintain a delicate balancing act to match the supply of computing with the ever-changing demand for it. A major business goal is to provide needed computing services at the lowest possible cost per unit of work. To do that, organizations must maintain sufficient capacity to satisfy peak demand, but at the same time they must have the knowledge that will enable them to plan for future computing needs over time. In the future, DCIM systems will supply a critical set of metrics that virtualization systems can use to match computing supply and demand automatically, without human intervention.

The Strategic Importance of DCIM

DCIM enables the data center to make the best use of the physical resources available, as well as enabling the seamless integration of the data center into the enterprise's other business management solutions for asset management, process management, data management, capacity planning, budgetary planning, and other important systems.

Information is the most strategic asset and competitive differentiator that an organization has. The data center floor is the producer of that strategic value. DCIM is the manager of that data center floor, consisting potentially of hundreds of millions of dollars in assets, as well as billions of dollars in information that flows through that data center.

The DCIM Stakeholders

Four functional categories have a direct interest in DCIM. The first, of course, is the IT organization, which consists of individuals who spend their entire careers keeping the data center operating. A close second is the facilities organization, which must supply power and cooling as well as maintain building infrastructure, in support of data center operations. Thirdly, the finance department is keenly focused on the costs of maintaining the data center infrastructure, and how those costs compare with the business value delivered by the data center. Finally, the executive team must understand the positive impact DCIM can have on their corporate management tasks, especially in regard to today's increased focus on IT costs.

How DCIM Influences, Enables, and Supports Corporate Goals for IT

Information technology has been in a state of continual transition since its beginnings. These changes, in a multitude of areas, can be smoother and less painful with DCIM. Here are some of the benefits of DCIM:

- The ascendancy of the cloud has had a major disruptive effect on the traditional way of scaling business processing. DCIM can be used with both public and private cloud infrastructures. It makes it easier to create IT infrastructures that are more elastic and responsive, while allowing high degrees of visibility and control of space, power, and networking resources. It also adds documentation of what it sees and controls.

- By actively managing assets, which in turn affects total power usage, DCIM can have a significant impact on an organization's cost structure and carbon footprint. DCIM allows energy usage to be modelled upfront instead of tracked after the fact.

- To optimally deploy assets within a data center, detailed capacity planning is essential. DCIM provides the detail identifying where each asset should be deployed and how frequently equipment should be refreshed. DCIM gives managers visibility of long-term usage trends.

- In the past, IT organizations reacted to problems only as they arose. But DCIM, by making fine-grained information available instantly, can enhance and coordinate changes across major systems. Users of DCIM systems can dramatically increase operational efficiency, increase productivity, reduce human errors, and even reduce the time it takes to solve problems that do arise. As a result, rather than requiring a large number of low-skilled workers who perform repetitive jobs, organizations with DCIM in place can utilize those workers in higher-skilled roles.

- The facilities that house a data center are costly, and DCIM extends the useful life of those data centers by identifying stranded resources and by optimizing the use of existing capacity. Avoiding the purchase of new capacity by making better use of the capacity you already have is a win for your organization.

- Regulations and legislation are getting more restrictive all the time, often in an attempt to reduce energy consumption and the carbon footprint of industry. With DCIM, you can identify energy consumption, enabling you to take action to reduce it per unit of work that is accomplished. In the process, DCIM also provides the documentation that regulatory agencies require.

Common Goals for DCIM

People with different functions come to DCIM with different goals, based on different needs that they have. Fortunately, DCIM actually can help with widely disparate requirements, including the following:

- One overriding goal is to reduce the operating cost of the data center. It is a truism that you can't manage what you don't measure. The instantaneous view that DCIM gives you of not only power consumption, but also every other critical resource gives you the basis for performing actions that will lower costs and continue to control them on an ongoing basis. Not only will you know what your power consumption is, you will know exactly which components of your system are consuming it, and at what rate.

- In addition to optimizing the use of electric power, a major goal of DCIM is to provide timely and accurate information about the available capacity of the data center for future growth.

- If you want a data center to operate according to best practices, it's important to be able to identify, optimize, and manage the workflows associated with change for the data center's physical assets. DCIM captures the processes associated with change, and then enforces the steps required to make those changes across the infrastructure.

- DCIM coordinates and consolidates disparate sources of real-time information into a single view of asset knowledge.

- DCIM gives predictability of space, power, and cooling capacity, increasing data center lifespans as well as lowering operating costs.

- DCIM translates raw data about the data center into actionable business intelligence suitable for all stakeholders.
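The workflow enforcement mentioned in the list above can be pictured as a small state machine. The following Python sketch is only an illustration: the class name `ChangeTicket` and the five step names are hypothetical, not taken from any DCIM product. It shows the basic idea that a change can't skip ahead; each step must be completed in order.

```python
class ChangeTicket:
    """Tracks one physical change and refuses out-of-order steps."""

    # Hypothetical step names; a real workflow would define its own.
    STEPS = ("requested", "approved", "installed", "verified", "documented")

    def __init__(self, asset: str) -> None:
        self.asset = asset
        self.completed: list[str] = []

    @property
    def done(self) -> bool:
        return len(self.completed) == len(self.STEPS)

    def advance(self, step: str) -> None:
        """Record the next step, or raise if it is not the expected one."""
        if self.done:
            raise ValueError("workflow is already complete")
        expected = self.STEPS[len(self.completed)]
        if step != expected:
            raise ValueError(f"expected step {expected!r}, got {step!r}")
        self.completed.append(step)


ticket = ChangeTicket("server-42")
ticket.advance("requested")
ticket.advance("approved")
print(ticket.completed)
```

Because the completed steps are kept on the ticket, the same record doubles as documentation of what was done and in what order.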

Why DCIM Is Important Today

Modern DCIM suites touch every aspect of the operation of a data center. They provide value in a number of different ways. Some of these ways have to do with controlling costs, some facilitate smoother and more supportable operations, and others make it easier to operate in compliance with government regulations.

Triggers to the acquisition and deployment of DCIM

DCIM is a relatively new discipline, but of course organizations have been concerned with asset management since the first data centers started operating. Early approaches to data center asset management were merely extensions of financial bookkeeping tools. Accounting systems were augmented by adding information about physical attributes of assets and who owned what. Some efforts went beyond that to include crude visualizations of rack and floor layouts. These early efforts added some value to the enterprise, but were not considered strategic, so they didn't improve much with time. They were considered tactical tools and not critical.

Everything changed when the financial crisis of 2007-2008 turned into the Great Recession. The price of energy skyrocketed, while the income streams for many organizations plummeted. Reduced customer spending caused the data center industry to look for ways to become more efficient. Luckily, some of the DCIM solutions at that time had risen to a level of maturity to answer those needs.

Managing capacity

The three physical resources that have the biggest impact on data center cost are power, cooling, and floor space. The cost of power has been rising steadily, and as power consumption increases, the need for more cooling capacity increases right along with it. In addition to requiring more power and cooling capacity, floor space in the data center must be treated as a precious commodity because it is finite in nature.

The cost to add space to the infrastructure is enormous. High-quality space is actually hard to come by. Power is available in most locations, but at a cost that continues to rise. And remember that for every watt of power that is used by IT equipment, another watt of power is used to cool that equipment. The analytics functions of DCIM installations show where and how these physical resources are used and predict future needs based on existing trends.
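To see what the watt-for-watt rule of thumb means for a power budget, here is a minimal Python sketch. The function name `facility_power_kw` and the `cooling_ratio` parameter are made up for illustration, and the default of 1.0 simply mirrors the rule above; a real site's cooling overhead has to be measured.

```python
def facility_power_kw(it_load_kw: float, cooling_ratio: float = 1.0) -> float:
    """Estimate total power as IT load plus cooling overhead.

    cooling_ratio is the watts of cooling per watt of IT load. The default
    of 1.0 is the rule of thumb from the text, not a measured value.
    """
    if it_load_kw < 0 or cooling_ratio < 0:
        raise ValueError("loads and ratios must be non-negative")
    return it_load_kw * (1.0 + cooling_ratio)


# A 500 kW IT load under the one-for-one assumption.
print(facility_power_kw(500.0))
```

Lowering `cooling_ratio` in the call shows how much total power drops when cooling becomes more efficient for the same IT load.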

DCIM can also point out equipment that's drawing power but contributing little or nothing to the productivity of the data center. Sometimes, equipment continues to run just because it always has. DCIM can identify equipment that is no longer needed and, using its workflows, can enable the decommissioning of that gear, thereby saving power and space. Up to one-third of all devices on a data center floor may be unnecessary.
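The idea of flagging gear that draws power but does little work can be sketched in a few lines of Python. Everything named here is an assumption for illustration: the `Device` fields, the `avg_utilization` metric, and the 5 percent threshold are not drawn from any particular DCIM suite.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    power_watts: float       # measured draw
    avg_utilization: float   # 0.0 to 1.0; hypothetical activity metric


def decommission_candidates(devices, max_utilization=0.05):
    """Return devices that draw power but show little activity.

    Results are sorted with the largest power draw first, so the biggest
    potential savings appear at the top of the list.
    """
    idle = [
        d for d in devices
        if d.power_watts > 0 and d.avg_utilization <= max_utilization
    ]
    return sorted(idle, key=lambda d: d.power_watts, reverse=True)


inventory = [
    Device("web-01", 350.0, 0.62),
    Device("old-db", 480.0, 0.01),
    Device("test-box", 210.0, 0.00),
]
for device in decommission_candidates(inventory):
    print(device.name, device.power_watts)
```

A list like this is only a starting point; someone still has to confirm that a quiet device is truly unneeded before it enters a decommissioning workflow.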

DCIM can also pinpoint older equipment that must be refreshed to realize the modern economics of more efficient models.

Re-engineering business processes

Traditionally, data centers have operated on the principle of "high service levels at any cost." Today, with tighter budgets, this mantra has been transformed into a planned and predictable approach where the question is asked, "What is the value and cost of each service?" Individual service value and cost have now been added as considerations. IT organizations are being asked to document their existing approaches and then evaluate whether those practices still make sense. Many organizations find that their existing practices impede their ability to streamline their operations.

DCIM enables organizations to create baselines from which they can author new optimized workflows and manage their assets over long periods of time. With DCIM, organizations can capture current business practices and then modify them to optimize performance and improve efficiency.

Consolidating resources

Data center consolidation has been a major trend for the past several years, and it promises to continue on into the future. Advances in computing technology have enabled the concentration of more computing power into an ever-smaller space. And corporate mergers have resulted in mergers of those corporations' data centers.

DCIM directly supports the volume of commissioning and decommissioning of computing equipment found in data centers that are undergoing consolidation. Entire structures can be exported easily from one location and redeployed in another. DCIM becomes an essential enabler of data center migration and consolidation projects.

Adding new capacity or even new data centers

Technology is advancing at such a rapid pace that many organizations suddenly find that their data centers are several generations behind, and aren't up to the demands currently being made on them. These older data centers were designed with growth in mind, but not to the extent that has been required over the past several years. The near future promises to put an even greater strain on systems. The massive amount of data being collected and processed now will only get larger.

DCIM enables the quantification of current data center capacity, with an eye toward managing the demand for increasing capacity going forward. The tools available in modern DCIM suites can study a sample of time-based usage data, in combination with demands for new corporate initiatives, to provide the information needed for highly accurate data center planning. DCIM not only quantifies data center capacity, it also allows future capacity to be projected.
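Projecting capacity from time-based usage data can be as simple as fitting a trend line. The Python sketch below assumes evenly spaced monthly power readings and a straight-line trend; the function name `months_until_full` is hypothetical, and real planning tools would also account for planned projects and seasonal swings.

```python
def months_until_full(monthly_kw, capacity_kw):
    """Estimate months from the last reading until capacity is reached.

    Fits a least-squares straight line to the readings. Returns None when
    usage is flat or falling, because the line never reaches capacity.
    """
    n = len(monthly_kw)
    if n < 2:
        raise ValueError("need at least two readings to fit a trend")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(monthly_kw) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, monthly_kw))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    # Month index where the fitted line hits capacity, relative to the
    # last reading (index n - 1). Clamp at zero if already over capacity.
    return max(0.0, (capacity_kw - intercept) / slope - (n - 1))


readings = [100.0, 110.0, 120.0, 130.0]   # made-up sample data, in kW
print(months_until_full(readings, capacity_kw=200.0))
```

The same function works for floor space or cooling if you feed it those readings instead of power.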

Minimizing costs

A data center's operating costs are dominated by the carrying cost of the IT equipment and its power and cooling. Curiously, the carrying costs of IT gear have been a little-understood concept in IT organizations. These costs include acquisition, depreciation, warranty, and service. Combined, these costs are a significant factor in operating costs. Technology refreshment is the most common approach to minimizing this component.

Additionally, with green IT high on the priority list of every Chief Sustainability Officer, one goal is to create a data center that produces the greatest amount of computation per watt of electricity consumed.

In order to make the optimizations that will reduce power consumption and save money, a wide array of measurements must be made and evaluated to get a baseline of the resources currently in use by the data center. DCIM can then identify areas that can be optimized. As computing load and other conditions change, DCIM continues to suggest adjustments that can provide continuous improvement.

Performing a technology refresh

IT has been growing steadily for much of the last 50 years, and over the past 20 at an accelerating rate. This rapid growth means that at fairly regular intervals, major changes to the equipment in a data center must be made. These technology refreshes may call for an entire redesign of a data center's infrastructure. With a new design, old ways of doing things may no longer be effective, let alone optimal. Best practice manuals must be rewritten to accommodate new styles of computing. Among other things, DCIM can be used to document the new structure, giving you a solid baseline as well as enforcing each of the workflow steps required to make ongoing changes.

Designing for sustainability

Sustainability has to do with preserving the Earth's nonrenewable resources to the greatest extent possible. These nonrenewable resources include the fossil fuels that are used in the production of most of today's electricity. There is an urgency to trim electricity usage in the data center due to its sheer magnitude and associated costs. The greenhouse gas emissions associated with that consumption are generally referred to as a data center's carbon footprint. Organizations strive to reduce their carbon footprints. Similarly, reductions in electricity usage also conserve water, because water is the primary medium used to carry away waste heat from the data center's cooling system.

The environment benefits when a data center runs more efficiently. Less electricity is used, which saves money; less water is used for cooling; and fewer pollutants are spewed into the atmosphere in the process of generating electricity.

DCIM provides the information that enables data center managers to identify equipment that uses more resources than are justified, enabling replacement by more efficient equipment.

The oversight challenge

The impact of the data center has become so pervasive throughout an enterprise that it is closely scrutinized not only by senior management, but also by watchdog and government regulatory agencies. Senior management wants to know that the data center is being run efficiently and government agencies are concerned with the environmental impact of the data center. DCIM enables the data center to be seen as a single system, with each of the components identified and understood. The efficiency of each component is made visible and can be optimized on a continuing basis as load factors and environmental variables change.

Due to product life cycles, financial models, optimizations, and evolution, a conservative estimate says that more than one quarter of all data center assets should be changed every year. For a data center with 250 racks of equipment, that equates to more than a dozen changes every day of the year. Each of these changes can be viewed as a project. In such an environment, manual processes or documentation are overwhelmed. DCIM is really the only tool that can manage such massive change.
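The text doesn't say how it counts a change, so here is one hedged way to get a daily figure of that order. The Python sketch below assumes about 40 devices per rack and counts each replaced asset as two work items (a removal and an install). Both numbers are assumptions chosen for illustration, as is the function name `changes_per_day`.

```python
def changes_per_day(
    racks: int,
    devices_per_rack: int,
    annual_change_fraction: float,
    work_items_per_change: int = 2,   # assumed: one removal plus one install
    days_per_year: int = 365,
) -> float:
    """Estimate daily change volume from fleet size and turnover rate."""
    assets = racks * devices_per_rack
    yearly_items = assets * annual_change_fraction * work_items_per_change
    return yearly_items / days_per_year


estimate = changes_per_day(
    racks=250, devices_per_rack=40, annual_change_fraction=0.25
)
print(f"{estimate:.1f} changes per day")
```

Change the assumptions and the figure moves accordingly, which is exactly why a tool that tracks the real counts beats a back-of-the-envelope estimate.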

Relating to the cloud

There are two types of clouds: public clouds and private clouds. Public clouds are businesses in themselves, run by major companies such as Google, Microsoft, or Amazon. Thousands, or even millions, of people or organizations can store their information on these clouds. Public clouds offer self-service, quick provisioning, and accounting, along with world class security and guaranteed uptime. To operate on this scale, public clouds absolutely must use some form of DCIM to responsively manage assets and dynamically tune their systems to variations in supply and demand. Without DCIM, public clouds can't deliver the level of service that users have come to expect.

Private clouds are basically traditional data centers that have been transformed using the principles pioneered by the public clouds. DCIM solutions are proving to be significant enabling technologies for this IT infrastructure reengineering. DCIM is all about enabling the data center to be responsive and aligned to the business.

The Business Mantra

If you want a data center to be operated like a business in its own right, it needs to have the attributes of a business. The following sections discuss the key business attributes that apply to data centers.

Repeatability of processes

In order for any business to run efficiently, standard processes for the way things are to be done must be established, and once established, they must be followed consistently and with repeatability. As processes become routine, productivity goes up and errors go down. This principle applies to data centers just as well as it does to entire businesses.

Predictability of results and timing

If your processes are always performed the same way on a consistent basis (repeatability), they should always produce predictable results, and they should do it in about the same amount of time, every time (predictability). DCIM is the tool that takes advantage of the repeatability of your processes to enable you to predict the performance of your data center, in the presence of variations in processing load and other variables.

Supportability using documentation

In order for a business to be viable over the long term, it must have some institutional memory. Because staff turnover is a reality in any business, that institutional memory needs to be codified in formal documentation. For something as complex as a data center, accurate and highly detailed documentation is critical. Not only is it important to document the physical plant and all the equipment in it, you must also document the change management processes that are performed on the equipment. Maintaining this type of detail is exactly what DCIM is designed to do.

Accountability: Instant access to any aspect of the structure, performance, and value

A data center manager should be prepared at any moment to justify the value that the organization is receiving from the data center. To be able to do that, she must have instant access to the structure of the data center and how it's performing at the moment. The integrated view provided by DCIM gives immediate access to an overview of operations, with the ability to drill down to any desired level of detail, and it is the only way to provide a responsive answer.

Documented: Diagrams, impact analysis, system of record

The documents used to provide the repeatability, predictability, supportability, and accountability mentioned in the preceding sections must be comprehensive, including detailed diagrams of all connections between components. The documentation should include a complete description of the system of record, from the most detailed level all the way up to an overview of the system. Another important part of the documentation is an impact analysis that predicts the way the system would be affected should a failure occur to one of its critical components, such as a core router or storage switch. DCIM documentation can tell you what is affected upstream or downstream to help quantify production impacts.
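Downstream impact analysis boils down to walking a dependency map. The following Python sketch assumes the connections are available as a simple dictionary that maps each component to the components it feeds; the function name `downstream_impact` and the sample device names are hypothetical.

```python
from collections import deque


def downstream_impact(feeds, failed):
    """Return every component that depends, directly or not, on `failed`.

    `feeds` maps a component name to the components it supplies.
    Uses a breadth-first walk and tolerates loops in the map.
    """
    affected = set()
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for child in feeds.get(node, ()):
            if child not in affected:
                affected.add(child)
                queue.append(child)
    affected.discard(failed)   # the failed component is the cause, not an impact
    return affected


connections = {
    "core-router": ["switch-a", "switch-b"],
    "switch-a": ["server-1", "server-2"],
    "switch-b": ["server-3"],
}
print(sorted(downstream_impact(connections, "switch-a")))
```

Reversing the map (component to its suppliers) and running the same walk gives the upstream view.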

Aligned: Goals of the data center are closely aligned with those of the enterprise

In large organizations, there is often a tendency for IT to operate as if it is an enterprise in its own right (an enterprise within an enterprise). This can lead to problems if the goals of IT leadership diverge from those of the enterprise in general. By tightening the integration between IT, finance, and facilities, as well as other functional groups in the organization, DCIM helps IT to see itself as an integral part of a larger entity rather than as an organization that is isolated by its raised floor from the parts of the organization that live on the ground floor.

The Key Components of DCIM

In This Chapter

- Planning floor space and rack space

- Looking at the equipment catalog

- Following asset life cycle requirements

- Planning for capacity and integrating with existing structures

- Dealing with data

Today's most robust Data Center Infrastructure Management (DCIM) solutions include all the necessary functions to support the required equipment provisioning, optimization, remediation, and documentation of your data center. Mature DCIM suites contain a range of functional modules that work together seamlessly. These modules offer many ways to gather large amounts of static and dynamic data, store it, correlate it, and then present the resulting information in a comprehensible way.

This chapter discusses the building blocks of a comprehensive DCIM solution.

Floor Space Planning

Each data center is shaped in its own way and has a fixed amount of floor space into which everything must fit. Most of the equipment included will reside in standard-sized racks, but some things will have nonstandard shapes or sizes.

Data centers are laid out on an X-Y coordinate grid that aligns with the placement of the 24-inch floor tiles in a traditional raised-floor data center. Even though data centers today are rarely simple rectangles, may not use raised flooring systems, and contain obstacles such as support columns and cooling equipment, the X-Y system is nonetheless universally used. The DCIM solution's floor planning component must account for differences in geometry and record an accurate position for each rack or other device.
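The X-Y tile grid maps naturally onto a small data structure. The Python sketch below is a simplification under stated assumptions: each rack is anchored to a single tile, obstacles are recorded as blocked tiles, and the class name `FloorGrid` is invented for illustration. Only the 24-inch tile size comes from the text.

```python
TILE_INCHES = 24  # tile size from the text


class FloorGrid:
    """Records which rack is anchored at which floor tile."""

    def __init__(self, columns, rows, blocked=()):
        self.columns = columns
        self.rows = rows
        self.blocked = set(blocked)   # tiles taken by columns, cooling units, etc.
        self.placements = {}          # (x, y) tile -> rack name

    def place(self, rack, x, y):
        """Anchor a rack at tile (x, y), rejecting invalid or taken tiles."""
        if not (0 <= x < self.columns and 0 <= y < self.rows):
            raise ValueError("tile is outside the floor grid")
        if (x, y) in self.blocked or (x, y) in self.placements:
            raise ValueError("tile is not available")
        self.placements[(x, y)] = rack

    def position_inches(self, x, y):
        """Distance of the tile's corner from the grid origin, in inches."""
        return x * TILE_INCHES, y * TILE_INCHES


grid = FloorGrid(columns=10, rows=8, blocked={(2, 3)})
grid.place("R01", 4, 5)
print(grid.position_inches(4, 5))
```

A fuller model would let a rack cover several tiles and would handle rooms that aren't rectangles, but the tile coordinate stays the basic unit of position.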

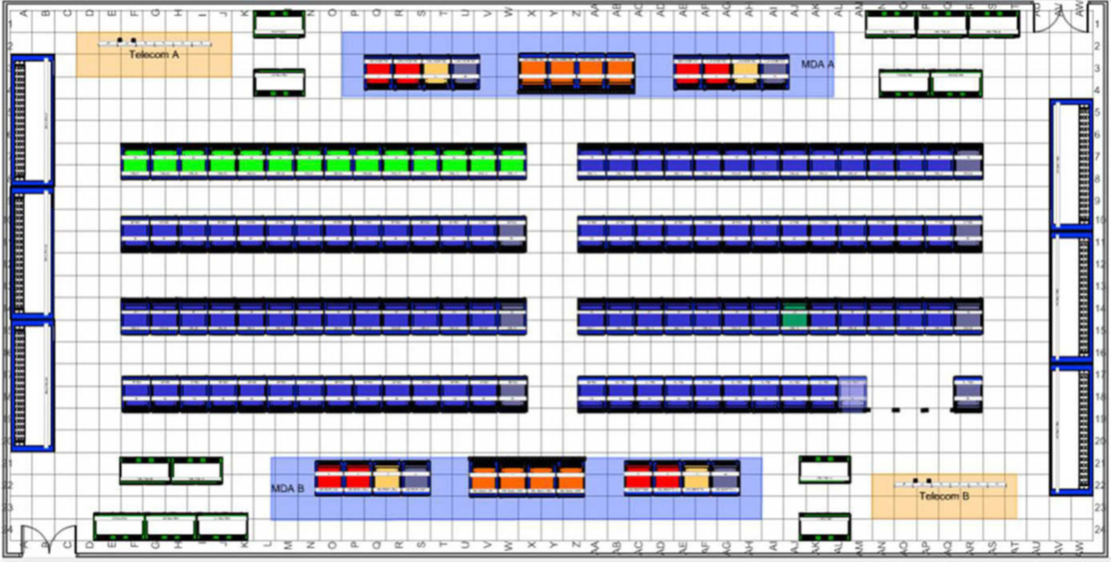

Most DCIM offerings also include an overall visual representation of the data center. Figure 2-1 shows an overhead view of a data center. Such overhead views can use color coding to represent data for categories such as ownership, function, capacity, or temperature.

Figure 2-1: A data center floor plan.

Rack Planning and Design

Data center racks are the basic building blocks from which data centers are built. Each rack is typically a steel cabinet that is 6 feet high, 2 feet wide, and 3 feet deep, although these height and depth dimensions may vary from one rack type to another. Approximately forty to fifty 1U devices fit into a rack. (Each U represents a vertical height of 1.75 inches.) Depending on the sizes of the devices in a given rack, that number of devices may be greater or smaller. Equipment can be installed into a rack either from the front or the back, and may be either mounted on shelves or bolted into the rack frame. DCIM offerings enable racks to be placed on the floor tile map. As a result, the DCIM solution contains a high-fidelity representation of the location and placement of every device.
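The rack-unit arithmetic is easy to check for yourself. This Python sketch uses the 1.75-inch unit height from the text; the function names are hypothetical, and the usable mounting height of a real cabinet is usually a bit less than its outside height, so treat the inputs as assumptions.

```python
RACK_UNIT_INCHES = 1.75  # height of 1U, from the text


def rack_units(usable_height_inches: float) -> int:
    """Whole rack units that fit in the given mounting height."""
    return int(usable_height_inches // RACK_UNIT_INCHES)


def free_units(total_units: int, installed_heights_u) -> int:
    """Units left after subtracting the heights of installed devices."""
    used = sum(installed_heights_u)
    if used > total_units:
        raise ValueError("installed devices exceed the rack's capacity")
    return total_units - used


total = rack_units(72.0)   # 6 feet of mounting height, as an example
print(total, free_units(total, [1, 1, 2, 4]))
```

Because devices come in 1U, 2U, 4U, and larger sizes, the number of devices a rack holds depends on the mix, which is why the free-unit count matters more than a device count.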

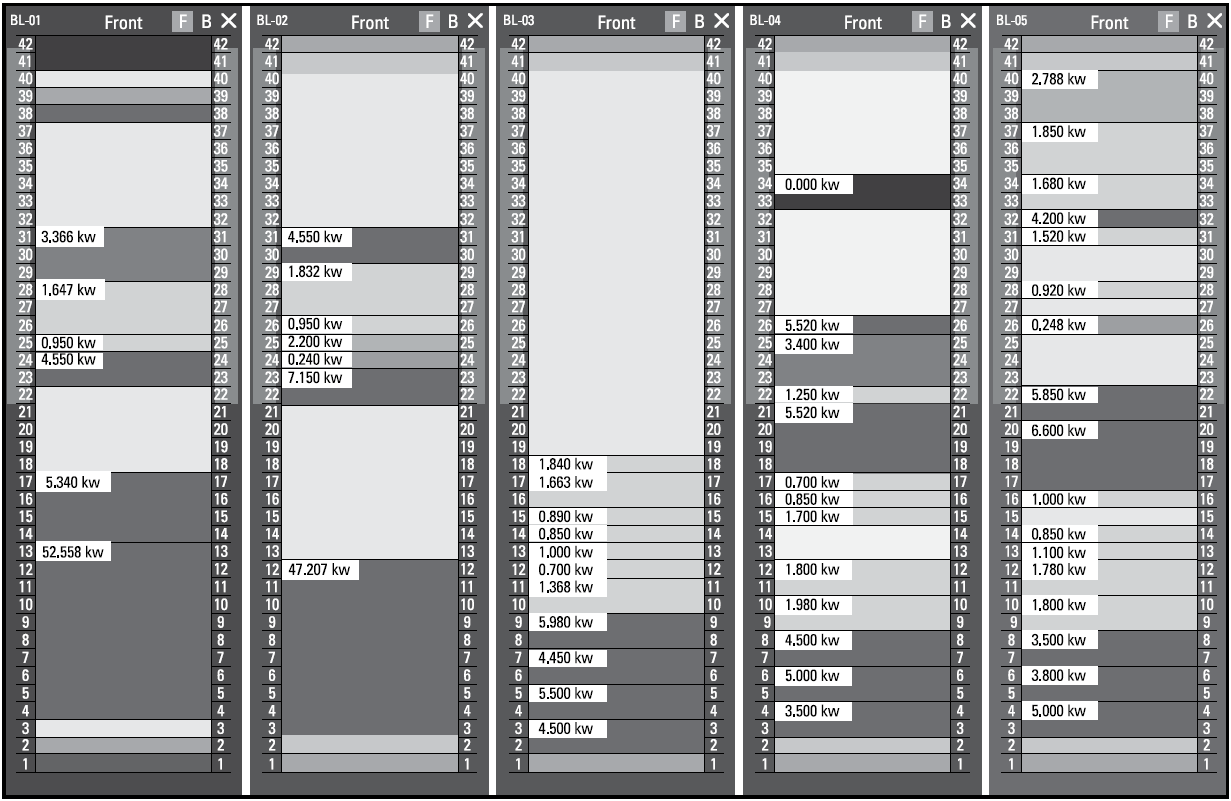

Knowing which equipment is in each rack is an important part of asset management. An understanding of what specific equipment each rack contains enables data center personnel to quickly determine not only the context of how devices are deployed, but also keep a running track of space, power, and cooling resources available for each rack. Prospective DCIM customers should pay close attention to how their DCIM vendor plans to maintain the accuracy of this data. In most cases, DCIM vendors start with the entries in their equipment materials catalog as building blocks for rack design, as shown in Figure 2-2.

Figure 2-2: Rack planning tools allow a highly accurate representation of all installed assets.

When planning new deployments, the DCIM suite can optimize the placement choice for each piece of equipment based on required resources. This design-time optimization is critically important for making the best use of the data center and preventing stranded resources. This type of interactive design is appropriate once a DCIM solution has been in operation for some time. When starting out, however, rack design can be handled by the DCIM solution's bulk import capabilities, which load large numbers of devices into their racks along with the associated connectivity, typically derived from older spreadsheets and other electronic files.

The Equipment Materials Catalog

Over time, new business initiatives will require additional devices to be placed in racks on the data center floor. To facilitate this, mature DCIM solutions contain a catalog of equipment asset types. New devices that are added must be selected from that catalog or manually created, and are then used to populate the model of the data center on an ongoing basis.

Most vendors of DCIM solutions supply material libraries in excess of 5,000 devices. The materials catalog contains representations of devices and includes their manufacturers' specifications. Usually included are high-resolution renderings of the front and back of the device, power requirements, physical dimensions, weight, connectivity, and other relevant parameters. Complex devices may also include information about installable options such as power supplies and interface cards.

But don't be fooled by the size of this catalog because new models are introduced every day, which means the library will quickly become outdated. More important is the ease with which new entries can be added to your version of the catalog.

Some vendors will add new entries at a customer's request. When shopping for a DCIM vendor, consider what is required to add any new devices to your version of the catalog yourself or what commitment you have from the DCIM vendor to do so.

Change Management

Every asset in a data center has a life cycle, as does the data center itself. That life cycle starts with installation, continues through productive use, and ends when the asset is replaced by something that more accurately satisfies the changed needs of the organization. The increasing rate of change demands the incorporation of DCIM into any data center desiring efficiency. The fiscal benefits of deploying DCIM to manage changes can far exceed the savings derived from reduced power consumption alone.

At its core, DCIM is a structured approach to asset life cycle management. The life cycles of the assets in a data center should be managed over their entire lifespan in the data center. Over the course of these years, thousands of changes will be made to the data center. Your IT business analysts will recommend that equipment be refreshed on a schedule that matches its depreciation and warranty periods, typically every three to four years. If a quarter to a third of your servers are being changed out every year, imagine what must be done on a daily basis. DCIM is the business management platform that keeps all these Add/Move/Change cycles in order, documents the process at each step, and identifies tasks that need to be completed. Following a consistent set of steps provided by DCIM workflows reduces human error, saving time and money.
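To put rough numbers on that daily workload, the sketch below works through the refresh arithmetic. The fleet of 1,000 servers and the figure of 250 working days per year are assumptions chosen only for illustration.

```python
def refresh_rate(server_count, refresh_years, working_days_per_year=250):
    """Return (servers replaced per year, servers replaced per working day)."""
    per_year = server_count / refresh_years
    per_day = per_year / working_days_per_year
    return per_year, per_day


# Hypothetical fleet of 1,000 servers on a three- or four-year refresh cycle
for years in (3, 4):
    per_year, per_day = refresh_rate(1000, years)
    print(f"{years}-year cycle: {per_year:.0f} servers/year, {per_day:.1f} per working day")
```

Each replacement typically involves several individual add, move, and change steps, so the number of tracked tasks is higher than the number of servers replaced.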

Capacity Planning

One of the most sought-after capabilities of a modern DCIM suite is its ability to manage the capacity of production resources. Data centers have physical capabilities and limitations. Whether considering floor space, weight, power, connectivity, or cooling, each data center has a set of physical limitations that determines its maximum capacity for work. DCIM gives you clear visibility into these limiting factors, tracking them over time. Tracking all resources over time enables predictions to be made about when any particular resource will be exhausted, and thus when supplementary or even replacement capacity must be brought online.
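One simple way to turn tracked history into a prediction is to fit a straight line to past usage and see where it crosses the capacity limit. The sketch below does this with an ordinary least-squares fit; it is an illustration of the idea under the assumption of steady linear growth, not a description of how any DCIM product forecasts. The function name and the sample figures are hypothetical.

```python
def months_until_exhausted(history, capacity):
    """Fit a least-squares line to monthly usage readings and estimate how many
    months after the last reading the capacity limit is reached.

    Returns None when usage is flat or falling, and 0.0 when the limit has
    already been reached.
    """
    n = len(history)
    if n < 2:
        raise ValueError("need at least two readings to fit a trend")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(history) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, history))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    month_at_limit = (capacity - intercept) / slope
    return max(0.0, month_at_limit - (n - 1))


power_kw = [310, 320, 330, 340, 350, 360]  # hypothetical monthly peak draw
print(months_until_exhausted(power_kw, capacity=420))
```

Real usage rarely grows in a perfectly straight line, so a forecast like this is best treated as an early warning rather than a precise date.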

Visibility of past and present data enables the recognition of trends that can be extrapolated into the future. Mature DCIM solutions use such data to make forecasts of future needs and the capacity that will be needed to satisfy those needs. Recently, an IDC survey found that almost one third of all data centers have been forced to delay the introduction of new business services, and more than three quarters of those same data centers have had to spend extra OPEX budget to maintain a poorly designed or out-of-date data center structure. The costs of such delays can be huge.

A mature DCIM suite is based on a model that includes a fine-grained representation of the data center, enabling it to identify where resources such as floor space, power, cooling capacity, and connectivity exist. Over several years, many data centers have lost the use of available resources when the data center's original design is modified and resources are moved, often to places where they can't be effectively used.

Another problem is locating a resource in a place where a normally co-resident resource isn't available. For example, a high density blade server may be located in an area with plenty of power, but limited cooling capacity. That power essentially becomes "stranded." It can't be used without sufficient cooling. The same kinds of imbalances can occur across all of the data center's resources. Modern DCIM solutions help by identifying where resources exist and suggest the optimal placement of new devices based on the availability of all of the required resources at the same place. Such optimization can add two or more years of useful life to an existing data center structure.
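The placement idea can be sketched in a few lines. The example below checks that space, power, and cooling are all available in the same rack, then picks the rack that leaves the least capacity behind. This best-fit rule, the `Rack` fields, and the assumption that every watt of power drawn becomes a watt of heat to remove are all simplifications made for this illustration.

```python
from dataclasses import dataclass


@dataclass
class Rack:
    name: str
    free_u: int            # free rack units
    free_power_w: float    # unused power budget, in watts
    free_cooling_w: float  # unused cooling capacity, in watts of heat


def fits(rack, need_u, need_power_w):
    """A device fits only if space, power, and cooling are all available."""
    return (rack.free_u >= need_u
            and rack.free_power_w >= need_power_w
            and rack.free_cooling_w >= need_power_w)  # assume all power becomes heat


def best_rack(racks, need_u, need_power_w):
    """Pick the fitting rack that leaves the least unused power and cooling."""
    candidates = [r for r in racks if fits(r, need_u, need_power_w)]
    if not candidates:
        return None
    return min(candidates,
               key=lambda r: (r.free_power_w - need_power_w)
               + (r.free_cooling_w - need_power_w))


def stranded_power_w(rack):
    """Power that cannot be used because cooling runs out first."""
    return max(0.0, rack.free_power_w - rack.free_cooling_w)


racks = [Rack("A01", 10, 6000, 2000),   # plenty of power, little cooling
         Rack("A02", 8, 4000, 4500),
         Rack("A03", 20, 8000, 8000)]
choice = best_rack(racks, need_u=6, need_power_w=3500)
print(choice.name, stranded_power_w(racks[0]))
```

In this hypothetical floor, rack A01 has 4,000 watts of power that can't be used until more cooling reaches it, which is exactly the stranded condition described above.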

Integrating with Existing Management Frameworks

Before the advent of DCIM systems, data centers were managed only at the logical level by point management solutions. These solutions ignored the physical structure altogether. The most useful DCIM solutions are those that connect to those preexisting solutions as well as traditional business management applications that have been used to coordinate workflows and track assets. Integration with these existing systems is critical to extending the value of service desk and ticketing processes. DCIM vendors are increasingly finding their customers asking for integrations with these systems. Integrations can range from simple device access via standard protocols such as SNMP, Modbus, or WMI, to more complex web-based integrations of workflow and CMDBs.

Prospective customers should consider a vendor's availability of off-the-shelf conduits rather than professional services engagements when making DCIM vendor choices.

It is commonplace today to dynamically match capacity to load at the logical and virtual machine levels, but not at the physical level. However, the physical level is the place where such dynamic management would do the most good, and that is exactly where DCIM fits.

Real-Time Data Collection

When many people hear about DCIM, they think, "Oh, that's only about real-time data collection." Fortunately, that couldn't be farther from the truth. Real-time data collection is just one of the sources of information that provides asset metrics in real time. In fact, real-time data collection represents just a tiny portion of the DCIM opportunity.

Two major types of operational data must be collected in a data center. The first type is data about the traditional IT devices, their power usage, and their virtual components. These devices commonly communicate via traditional networking protocols such as SNMP or modern web-based APIs.

The second type of data comes from the data center's thermal, mechanical, and electrical infrastructure. This includes the thermal sensors, bulk power distribution, and cooling devices. These devices communicate with any one of a number of different protocols, including SNMP, Modbus, BACnet, LON, and in some cases even ancient ASCII RS-232.

In most cases, the data collected by a data center's various sensors and monitoring devices is collected a few times per hour by polling those devices. The vast majority of real-time data collected relates to such things as temperature, humidity, air pressure, and electric power. Records of these variables can be studied over long periods of time by the analytic portions of the DCIM software, in search of any trends that might be detected in the data.

A variety of collection utilities have appeared from a wide range of vendors. In many cases, these monitoring solutions can be combined with DCIM suites to create an increasingly dynamic DCIM solution, with each individual data collection solution contributing its specific piece of the puzzle. A modern data center will have several data collection systems in place, each of which can be leveraged into a mature DCIM solution. In general, the more sources of metrics that feed into the DCIM suite, the better.

With all the data coming in from a multitude of sources, the DCIM solution becomes the aggregation point and correlation engine that interprets the raw data.

Reporting

One of the most highly visible features of any DCIM solution is its reporting capability. It is in reports that massive amounts of raw data are transformed into information in a form that can be comprehended and acted upon. DCIM vendors take two approaches to reporting. The first approach is for the DCIM suite itself to offer a detailed raw reporting or ad-hoc reporting capability. An interface is provided to the user, which gives full access to the data.

The DCIM user's challenge is to decide upon the specific reports needed to run their business. The tool to create these reports is less important than the specific data center reports that the vendor includes in its offering out of the box.

The second approach is for the DCIM vendor to include a canned set of data center business management reports that are known to be of value to data center professionals. These canned reports are typically supplied in a library of reports, arranged in logical categories for ease of access. Predefined reports are extremely valuable to the end user not only in saving the effort that would have to go into writing custom reports, but also in exposing aspects of the system that the end user may not even know exist. Furthermore, there is significant value in having predefined reporting templates that can be used as a starting point for a customized report.

Dashboards

Dashboards, like reports, are a way of understanding vast amounts of information, but in a graphical format. They can be considered the at-a-glance version of a report and in some cases can update dynamically.

A diverse array of dashboards is needed to manage a data center, each with its own intended audience. Some dashboards can be very simple, showing raw data points or roll ups. For example, a readout might answer the question, "What is the total cost of data center power right now?" Alternatively, dashboards can be much more complex, interpreting large quantities and diverse types of data, and presenting the result in easy-to-read indicators. An everyday example of such an indicator would be the "check engine" light in an automobile. It presents the simplest possible indication, but the decision to illuminate the light bulb is based on readings from hundreds of sensors, measuring a wide variety of things.
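A "check engine" style indicator can be sketched as a roll-up of many readings into one status. In the example below, the sensor names and the acceptable ranges are hypothetical values chosen for illustration; a real dashboard would draw its thresholds from your own operating policies.

```python
def rollup_status(readings, limits):
    """Collapse many sensor readings into one indicator: 'ok' or 'check'.

    readings: {sensor_name: value}
    limits:   {sensor_name: (low, high)} acceptable range per sensor
    Returns the indicator plus the names of the sensors that are out of range.
    """
    out_of_range = sorted(
        name for name, value in readings.items()
        if name in limits and not (limits[name][0] <= value <= limits[name][1])
    )
    return ("check" if out_of_range else "ok"), out_of_range


readings = {"inlet_temp_c": 24.0, "humidity_pct": 72.0, "pdu_amps": 11.5}
limits = {"inlet_temp_c": (18.0, 27.0),   # hypothetical thresholds
          "humidity_pct": (20.0, 60.0),
          "pdu_amps": (0.0, 16.0)}
print(rollup_status(readings, limits))
```

The single indicator is what the audience sees at a glance, while the list of offending sensors gives an operator a place to start investigating.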

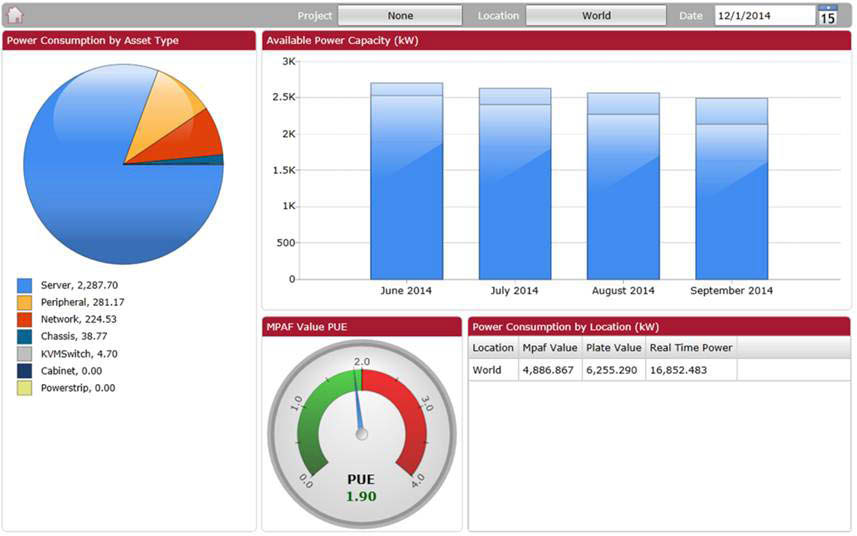

A complex DCIM dashboard may show the growth in power or space consumption over the previous 12 months, and go on to project how soon the current data center will run out of capacity. Thousands of points of time-series data may need to be considered when creating such a representation. Graphically it may be represented as shown in Figure 2-3.

Figure 2-3: Dashboards enable large amounts of data to be visualized easily and in real time.

The library of dashboards included by the DCIM suite vendor is an important metric of the chosen suite. Like reports, the vendor's own domain expertise and experience in managing data centers should be captured and presented as a canned set of dashboards ready to be used. A smoothly operating data center has a large and diverse array of stakeholders, all with specific metrics for which they're accountable. They each require a dashboard that speaks specifically to them. Finance looks at costs of equipment, depreciation, warranty coverage, and so on. Facilities professionals look for trends in power and cooling consumption. IT may be looking at space or virtualization sprawl. Each organization looks for answers to its own needs. DCIM dashboards deliver the required information in a form easily understood by those who need it.

Data Import and Export

When you first install a DCIM solution on an existing data center, it must be loaded with all of the information that had previously been collected by the ad-hoc tools (such as Excel). Importing electronic files is preferable to manually entering such data, as long as the existing files have a high degree of accuracy. In most cases, a mix of import and manual entry is used to start a DCIM project. Different DCIM vendors offer varying degrees of intelligence in the import process (to eliminate conflicts, omissions, or redundancies).

The basic import process provided by some vendors includes predefined templates and spreadsheet examples that the customer must then populate. All data from any existing source must be manually massaged and then copied and pasted into the provided spreadsheets to form the basis of the new DCIM system. The most mature DCIM vendors offer significantly more sophisticated solutions to the data import problem, using advanced error-checking. These will resolve many of the problems encountered in data importing by mapping the source information to the DCIM package's own data formats. The error corrections available during the importing process may include missing information lookup, sequential missing data replacement, asset field deduplication, proper handling of structured cabling range conventions, and a general ranking of data fields based on overlapping sources.
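Two of the corrections mentioned above, range handling and deduplication, can be sketched briefly. The `start-end` port notation, the `serial` key, and the rule that the first non-blank value wins are assumptions made for this example; actual import tools define their own conventions.

```python
def expand_port_range(spec):
    """Expand a port range such as '1-4' into [1, 2, 3, 4]; a single '7' gives [7].

    The 'start-end' notation is an assumption of this sketch.
    """
    start, _, end = spec.strip().partition("-")
    first = int(start)
    last = int(end) if end else first
    if last < first:
        raise ValueError(f"descending range: {spec!r}")
    return list(range(first, last + 1))


def merge_duplicates(rows, key="serial"):
    """Merge rows that share the same key, filling blank fields from later rows.

    All field values are expected to be strings, as they would be when read
    from a spreadsheet export.
    """
    merged = {}
    for row in rows:
        ident = row[key].strip().upper()
        target = merged.setdefault(ident, {})
        for field, value in row.items():
            value = ident if field == key else value.strip()
            if value and not target.get(field):
                target[field] = value
    return list(merged.values())


rows = [{"serial": "ab123", "model": "", "rack": "A01"},       # from one spreadsheet
        {"serial": "AB123 ", "model": "X100", "rack": ""}]     # from another
print(expand_port_range("1-4"))
print(merge_duplicates(rows))
```

Here two partial records for the same hypothetical device are combined into one complete record, which is the kind of overlap that tends to appear when several spreadsheets describe the same floor.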

Data import is an absolutely critical component of any DCIM solution, because it is used at initial startup and again on an ongoing basis as more sophistication is desired. Critical new information can be added regularly as that information becomes available.

In addition to data import, DCIM solutions also have a data export capability, allowing DCIM data to be sent to other systems in industry standard formats such as CSV or XLS.
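As a small illustration of CSV export, the sketch below writes a list of asset records to CSV text using Python's standard `csv` module. The field names and the sample asset are hypothetical, and a DCIM product's own export feature would decide which columns are included.

```python
import csv
import io


def export_assets_csv(assets, fields):
    """Serialize a list of asset dictionaries to CSV text with a header row."""
    buffer = io.StringIO(newline="")
    # Keys not listed in fields are skipped; missing keys become empty cells.
    writer = csv.DictWriter(buffer, fieldnames=fields, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(assets)
    return buffer.getvalue()


assets = [{"name": "web-01", "rack": "A01", "u_position": 12, "owner": "finance"}]
print(export_assets_csv(assets, ["name", "rack", "u_position"]))
```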

Organizing the Data Center

In This Chapter

- Looking at your DCIM platform

- Monitoring physical components

Data Center Infrastructure Management (DCIM) provides you with the opportunity to become extremely familiar with the physical assets of your data center. It allows you access to information about manufacturer's attributes as well as detailed deployment information such as physical resource requirements, installed location on the data center floor, maintenance records, organizational ownership, and warranty information. DCIM technologies keep track of this information, which often forms the basis for major business decisions. In addition to this fairly static information, a wealth of dynamic information about each asset (such as temperature) is available from dozens of supporting vendors, who add complementary technologies to the main DCIM platform.

In this chapter, I describe the DCIM platform itself as well as the instrumentation layer for the data center that supports the DCIM system.

The Platform's Architecture

In the early days of DCIM, people used software tools that were already available, adapting them to various aspects of data center organization. Tools such as Visio, AutoCAD, and Microsoft Excel were widely used to quickly create physical layer management solutions. The next series of data center management tools arrived in the mid-2000s and offered very basic feature sets, which were limited in scope and scaled only to the most modest of data centers.

DCIM was still in its infancy and many of those early "DCIM" solutions were built by data center services consultants for their own use in executing service contracts for their existing customers. Most of these early DCIM systems were never intended to be packaged and sold as commercial products. When they began to be offered in the marketplace, they were not broadly applicable but instead reflected their developers' specific experience and processes. Often running on a single PC or laptop, they worked well in single-user environments, but typically didn't scale well and only included features that the consultant felt were important for his or her own needs.

Since 2006, those early DCIM solutions have been steadily replaced by modern DCIM solutions, the most mature offerings taking the form of web-based products that are deployed across enterprise-class business servers. The web and all of its communications and presentation technologies have made possible the creation of complex data center management applications that can be easily scaled and widely accessed from anywhere on the Internet. Modern DCIM offerings typically scale by managing the data schema efficiently, using commercial high-performance database repositories, deploying distributed real-time collection engines, and using separate business intelligence engines for analytics.

The Platform's Data Model

A core component of any DCIM suite is its data storage model. With large amounts of data coming in from a wide variety of sources, an efficient mechanism must be included in the DCIM suite that understands how to manage large amounts of diverse asset and real-time metric detail. A DCIM system's data model must be robust enough to store data in a way that is quickly retrievable in the context of complex analytics.

Early DCIM solutions relied on open-source SQL database management systems, such as the community-supported PostgreSQL. These database solutions work well for some kinds of applications, but not so well for high volumes of data that must be queried interactively. Commercial database management products handle that same type of SQL storage, but at data lookup rates that are dramatically faster than open source.

With diverse data coming in from different kinds of sources, normalization of the data is critical to avoid confusion and enable analytics. For example, if different sensor vendors are delivering metric data in different units, conversions will need to be made so that the DCIM solution has to deal with only one set of units. Furthermore, functional modules in a rack could be numbered either from the top to the bottom or the reverse. Once again, it doesn't matter which way you choose, but you have to choose one and standardize on it throughout the entire data center.
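Both kinds of normalization can be sketched in a few lines. The example below converts temperature readings to Celsius and converts a top-down rack position to bottom-up numbering. Choosing Celsius and bottom-up numbering as the standard is an arbitrary assumption of this sketch, as are the function names; what matters is picking one convention and applying it everywhere.

```python
def to_celsius(value, unit):
    """Convert a temperature reading to degrees Celsius."""
    unit = unit.strip().upper()
    if unit == "C":
        return value
    if unit == "F":
        return (value - 32) * 5 / 9
    if unit == "K":
        return value - 273.15
    raise ValueError(f"unknown temperature unit: {unit!r}")


def to_bottom_up(position_u, height_u, rack_units, numbered_from_top):
    """Return the lowest occupied U slot, counted from the bottom of the rack.

    position_u is the lowest-numbered slot the device occupies in the source
    numbering scheme; height_u is the device height in rack units.
    """
    if not numbered_from_top:
        return position_u
    # Top-down slots p..p+h-1 map to bottom-up slots (N+1-p) down to (N-p-h+2).
    return rack_units - position_u - height_u + 2


print(to_celsius(77, "F"))
# A 2U device in the top two slots of a 42U rack that is numbered from the top
print(to_bottom_up(1, 2, 42, numbered_from_top=True))
```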

Of course, performance is always an issue when dealing with any computerized system. When a user is making a request of a DCIM system, he or she wants a response to come back right away. Nobody likes to sit in front of an unchanging screen waiting for a response. The way data is stored has a major impact on how quickly it can be retrieved. As a result, the amount of time that it will take to answer user requests has a major effect on the choice of a DCIM platform's data model.

The Platform's User Interface

Modern DCIM systems are web-based and operate from a common browser. The DCIM's graphical user interface (GUI) must present complex information in an easy-to-understand and easy-to-navigate fashion. The GUI is a key attribute of any DCIM solution, because customers will often base product adoption decisions on how intuitive the user interface is to the user. One well-known example of an intuitive GUI is Google Earth. A first-time user with no training can launch the program and then, using the intuitive widgets and the cursor, move to any desired place on the planet and zoom in down to street level. Google Earth serves as a proof of principle that intuitive access across vast data sets is not only possible, but easily accomplished by millions of users.

As with any complex application, the user interface is critical. It needs to connect a wide variety of users, operating at different levels, to an equally wide variety of data center assets. The requirements placed on a DCIM system's GUI go beyond those in effect in Google Earth. Whereas Google Earth is an example of one-way communication, with information traveling from the database to the user, the DCIM GUI is a two-way communication path. Not only does the user receive information from the DCIM database, but the user can actually effect changes in the system by moving assets or executing change management projects.

Instrumentation: Monitoring the Physical Components of a System

Modern data centers are complex systems, which means that a lot of data should be gathered regularly to provide the ever-changing current status of the power chain, the cooling system, the performance of the servers, and the virtualization layers, as well as a host of other variables. All this monitoring is done by sensors and other measurement devices that are collectively called instrumentation. DCIM instrumentation includes a wide range of technologies and protocols, each designed to capture a specific kind of data that records some aspect of the status and health of the data center. The amount of data gathered by the instrumentation system can be impressive. In a data center with 100 racks holding 1,000 servers, tens of thousands of readings are recorded and must be stored every minute.

Temperature, humidity, and airflow sensors

In the early days of data centers, the first items that would later become integral parts of DCIM solutions were sensors for environmental variables such as temperature and humidity. Widespread adoption was a long time coming, because data center managers didn't see a great need for the information provided by these sensors. However, as data centers became larger and more complex, out-of-range values for any of these environmental variables somewhere out on the data center floor could lead to early failure of equipment and poor utilization of resources.

As the need for environmental monitoring at a detailed level became more obvious, the American Society of Heating, Refrigerating, and Air-Conditioning Engineers (ASHRAE) developed and published a set of guidelines for the data center environment. ASHRAE's recommendations on sensor placement have been incorporated into many DCIM systems. As a result, data center managers now have a fine-grained view of the key variables in the data center environment and can spot trends that need to be addressed.

Environmental systems may be either wired or unwired. Wired systems were the first to appear. Such devices are connected to LAN ports located throughout the data center. They use standard web and IP-enabled protocols. Because these devices are wired, cabling can be complex and costly and their adoption has been limited in scope.

Wireless environmental sensing systems bypass the complexity and cost of cabling of a wired system. There are two types of wireless systems: AC powered and battery powered. The AC-powered systems tend to use Wi-Fi (802.11), while battery-powered systems use some variation of Zigbee (802.15.4) or active RFID.

The latest generation of battery-powered wireless monitoring devices is a valid alternative to the AC-powered variety. Power limitations do limit the amount of data that can be transmitted per unit time, but clever algorithms can squeeze a lot of information into a relatively small number of bits transmitted. As a result of using these devices appropriately, battery lives in excess of three years have been claimed in some cases.

Power monitoring

All the computation being done by a data center requires a steady supply of reliable electric power. Each equipment rack may contain up to 40 active devices, each of which requires power, cooling, and connectivity. The number of racks in a data center may range from a small handful to well into the thousands. Regardless of a data center's size, all of its racks must be supplied with power. This power is delivered within a rack by a rack-based power distribution unit (PDU).

Data center managers today focus on maximizing the efficiency of power usage. Over the past 10 or 12 years, elaborate power distribution strategies have been developed to make the amount of power available at any location in the data center match the amount of power needed.

One optimization strategy is to move power more efficiently by using higher voltages, three-phase cabling, and higher current flows along shorter cable runs. Another is to monitor power usage at a fine-grained (per outlet) level in real time.

DCIM provides visibility of the data center's power chains, which allows timely decisions to be made that will keep loads balanced, assuring that enough power and cooling are always available where they are needed.

Providing information to outside applications

DCIM solutions can simultaneously provide operational data gathered from many sources for any given asset. The available physical metrics include power consumption, power supply status, operational status, internal fan speeds, and temperature readings from different places within the device. DCIM suites enable you to consolidate all of this logical and physical information in one place.

Connecting to the building management system

Buildings today have extensive building management systems that control power distribution, air conditioning, and other environmental control subsystems. These building management components fall under the expertise domain of facilities rather than under IT but form an essential source of metric detail for the DCIM deployment. The true promise of DCIM is to seamlessly join the world of IT to the world of facilities. By giving visibility of all the assets of both the IT and the facilities worlds, DCIM's value can be maximized. Just as an example, a power chain consists of many links, including each server's power supply, the in-rack PDU, the floor-mounted PDU, and the data center UPS, as well as the breaker panels and the backup generators. Each of these, some from the IT world and others from the facilities world, is a component of the total power distribution picture, and must be considered when making business decisions about the data center.
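The power chain lends itself to a simple sketch: list each link with its capacity and its current load, then find the link with the least headroom. The capacities and loads below are hypothetical, and comparing links by absolute headroom in watts is a simplification chosen for this example.

```python
def limiting_link(chain):
    """Return (name, headroom_w) for the link with the least spare capacity.

    chain: list of (name, capacity_w, load_w) tuples, ordered from source to server.
    """
    if not chain:
        raise ValueError("power chain is empty")
    name, capacity, load = min(chain, key=lambda link: link[1] - link[2])
    return name, capacity - load


# Hypothetical chain mixing facilities links and IT links
chain = [("data center UPS", 500_000, 410_000),
         ("floor-mounted PDU", 150_000, 120_000),
         ("in-rack PDU", 5_000, 4_600),
         ("server power supply", 1_200, 420)]
print(limiting_link(chain))
```

In this made-up example, the in-rack PDU is the constraint even though the UPS and floor-mounted PDU have plenty of room, which shows why both the IT and the facilities links have to be visible in one place.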

The building management systems hold a wealth of information and when integrated into a DCIM suite will enable easy monitoring of cooling resources as well as power and environmental factors.

Deriving Value from DCIM

In This Chapter

- Examining the value of DCIM

- Stepping through some DCIM processes

Comprehensive Data Center Infrastructure Management (DCIM) is a game changer for the data center, and it is available now. In the past, management of the physical aspects of the data center has been poorly understood. As a result, in order to ensure resource needs would be met, the standard solution was to overprovision everything. By supplying an abundance of resources, data center managers could be confident that they wouldn't run out of capacity. This worked, but as you can imagine, it was very expensive. When space and energy costs shot up in recent years, it no longer seemed like such a good idea to maintain substantially more capacity than was really needed.

What Does DCIM Mean to the C-Level Executive?

C-level executives, although each one has his or her own specific area of responsibility, share a concern for the impact of information technology on the organization as a whole. They're concerned not only with the financial impact of computing but also with its agility and how it will affect their company's competitive situation, customers, employees, and shareholders, as well as the way the organization is perceived in the marketplace. DCIM helps with all of these concerns.

In addition to the C-level executives, people with a wide variety of roles will be affected by the introduction of DCIM into an organization. The interests of all stakeholders should be considered from the very beginning. It's a good idea to make a list.

Inefficiencies exposed translate into savings

The whole purpose of installing a DCIM system in a data center is to improve its efficiency of operation over time. It does so by exposing inefficiencies in a highly visible manner. Exposing inefficiencies is the first step toward correcting them. Improved efficiency translates into added value. Costs are reduced and the entire enterprise operates more smoothly. Savings due to efficiency improvements drop directly to the bottom line. In addition, improved efficiency makes everybody's job easier, but is particularly valuable to the C-level executives who are charged with the responsibility of directing all the major functional areas of the enterprise.

The data center is a core asset

The data center floor holds hundreds of millions of dollars' worth of physical assets, and billions of dollars' worth of information can pass through it. Your data center deserves to be carefully managed. The catalyst that has brought about the burgeoning adoption of DCIM is the rollup of costs due to the resources consumed and the streamlining of data center management. The data center can now be viewed as a single capital asset, an organization's most expensive asset with its own budget and expected return on investment.

A wide variety of products

With DCIM, physical layer resource management is now a reality. Everything that touches the physical layer is now referred to as DCIM. There are a handful of vendors with full-featured DCIM suites, each offering a comprehensive set of features that may include some form of asset life cycle management, with instrumentation and visual representations of the physical assets. Supporting these DCIM suites are other vendors that provide enhancement products that augment and round out the DCIM suites. Examples of these products are environmental sensors and intelligent power strips. And keep in mind the litany of other existing management systems that must also be integrated to maximize the value of a DCIM suite: service desk ticketing, building management software, and change management database repositories.

Entering into DCIM one step at a time

CFO, CIO, and even CEO executives are now searching for ways to proceed to the next phase in cost-effectively managing the data center. They view DCIM as the most promising opportunity to control their IT costs. A key part of DCIM's contribution is producing a coordinated IT and facilities costing and service delivery model. That integrated model is the basis of efficient, cost-effective operation.

When a data center reaches a certain size and level of complexity, the manager of the facility comes to realize that the tools that have been used so far for managing operations are no longer up to the task. The spreadsheets and floor plan documents that have served in the past have become unwieldy as the data center has grown. It is at this point that managers start to look into the possibility of installing a DCIM solution.

It's natural to think that moving from spreadsheets to DCIM is a big step, and indeed it is. Some fear that installing a full-scale DCIM solution will require their company to rethink and retool the way the data center is managed. Such folks may also be concerned that their staff may not be up to dealing with a new technology that is radically different from the ad-hoc process that they are used to. Lastly, people just hate to leave the comfort of the familiar for the challenges of the unknown. Generally, DCIM is a good move that can create a more stable environment and a predictable fiscal model of computing. That said, DCIM can be adopted in phases. There is no need to make a giant leap into the future. Instead, the data center can take a small step into DCIM, and then when comfortable with that, take another small step, and continue until the full value of an enterprise-level DCIM solution is realized.

Workflow and Process Optimization

After you have described your data center configuration within your DCIM suite, this critical information becomes the single highly trusted source of truth. It is now time to think about the ways you would like to change the processes you use in making physical changes to the production floor. Conceptually, you program these optimized new data center processes into your DCIM software. Your DCIM software starts to enable and enforce the new optimized processes.

In this phase, you can also start tracking usage rates over time. This historical data that you produce can then be used to improve forecasting. You can also start storing real-time data coming in from your sensors to your DCIM database, establishing a baseline for future forecasting.
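
As a rough illustration of how stored usage history can feed a forecast, the sketch below fits a straight line to evenly spaced power readings and estimates how many periods remain before a capacity limit is reached. The function names, the sample readings, and the 50 kW limit are all hypothetical, and a real DCIM suite will use its own (likely more sophisticated) forecasting methods.

```python
from statistics import mean


def linear_trend(samples):
    """Least-squares slope and intercept for evenly spaced samples."""
    xs = range(len(samples))
    x_bar = mean(xs)
    y_bar = mean(samples)
    numerator = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, samples))
    denominator = sum((x - x_bar) ** 2 for x in xs)
    slope = numerator / denominator
    intercept = y_bar - slope * x_bar
    return slope, intercept


def periods_until_capacity(samples, capacity):
    """Periods after the last sample until the trend line reaches capacity.

    Returns None when usage is flat or falling, because the fitted line
    never reaches the limit.
    """
    slope, intercept = linear_trend(samples)
    if slope <= 0:
        return None
    x_at_capacity = (capacity - intercept) / slope
    return max(0.0, x_at_capacity - (len(samples) - 1))


# Hypothetical monthly power draw for one row of racks, in kW.
monthly_kw = [40.0, 42.0, 44.0, 46.0]
print(periods_until_capacity(monthly_kw, capacity=50.0))
```

A straight-line fit is only a starting point. Its value here is to show that a forecast needs a baseline of consistent historical readings before it can say anything useful.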

Tech Refresh

Nothing lasts forever, and that is particularly true of IT equipment. As technology relentlessly follows Moore's Law, devices become ever smaller, faster, and more energy efficient. In many cases, what was the most efficient solution available a year or two ago is no longer competitive. Managers must continuously question whether the equipment currently out on the data center floor is making the best use of the resources it's consuming. In order to do that, managers need to know exactly what is on the data center floor and why it is there. They have to know who "owns" each piece of equipment and what its value is to the organizational business goals. Lastly, they need to ensure that the value to the business of having a particular device on the floor is higher than the cost of having it there. When a device's cost exceeds its value, it should be replaced. This is the essence of technology refreshment. As obsolescence overtakes one generation of equipment after another, technology refresh in a highly optimized data center becomes a continual process that actually helps keep costs down.
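
The cost-versus-value test described above can be sketched in a few lines. This is a minimal illustration only: the `Device` fields, the sample figures, and the idea that cost and value can each be expressed as a single annual number are assumptions made for the example, not features of any particular DCIM product.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    owner: str            # who "owns" the device
    annual_cost: float    # hypothetical: power, cooling, space, maintenance
    annual_value: float   # hypothetical: the owner's estimate of business value


def refresh_candidates(devices):
    """Return devices whose cost exceeds their value, worst shortfall first."""
    over = [d for d in devices if d.annual_cost > d.annual_value]
    return sorted(over, key=lambda d: d.annual_cost - d.annual_value, reverse=True)


# Hypothetical inventory pulled from the DCIM asset records.
floor = [
    Device("db-01", "finance", 12000.0, 30000.0),
    Device("legacy-app-07", "hr", 9000.0, 4000.0),
    Device("test-rig-03", "engineering", 5000.0, 4500.0),
]

for device in refresh_candidates(floor):
    print(device.name, device.owner, device.annual_cost - device.annual_value)
```

The hard part in practice is not the comparison but the inputs: the list is only as good as the ownership and value data recorded for each device.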

Strategic Planning and IT Service Management Integration

After you've installed and shaken out your new DCIM system, you can move well beyond tactics into the realm of strategy. You can set up multiple what-if planning scenarios for new proposed projects, using your DCIM solution to do such things as identifying potential failure points in the power chain and using predictive analytics to optimize the use of power, cooling capacity, and floor space. You also have the ability to continuously audit devices in the data center and identify potential errors in either the DCIM or configuration management database. At this point, the chosen DCIM solution should be tightly integrated with existing IT service management and change management systems and with the change management databases. This enables a single point of understanding over all the important functions of the data center. With DCIM, the big picture becomes visible to all those who are responsible for any aspect of the way the data center affects the enterprise.
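
One way to picture the continuous audit mentioned above is as a comparison between what the DCIM system records and what the configuration management database records. The sketch below assumes, purely for illustration, that each system can export a simple mapping of asset tag to rack location; the asset tags, locations, and the `audit` function are hypothetical.

```python
def audit(dcim, cmdb):
    """Compare two {asset_tag: location} maps and report discrepancies."""
    shared = dcim.keys() & cmdb.keys()
    return {
        "missing_from_cmdb": sorted(dcim.keys() - cmdb.keys()),
        "missing_from_dcim": sorted(cmdb.keys() - dcim.keys()),
        "location_mismatch": sorted(t for t in shared if dcim[t] != cmdb[t]),
    }


# Hypothetical exports from each system.
dcim_records = {"A100": "row1-rack3", "A101": "row1-rack4", "A102": "row2-rack1"}
cmdb_records = {"A100": "row1-rack3", "A101": "row3-rack2", "A103": "row2-rack5"}

report = audit(dcim_records, cmdb_records)
for kind, tags in report.items():
    print(kind, tags)
```

A report like this does not say which system is wrong. It only flags where the two disagree so that someone can check the production floor.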

Ten Steps to a Successful DCIM Implementation

In This Chapter

- Seeking successful DCIM implementation

Data Center Infrastructure Management (DCIM) systems are comprehensive enterprise-class solutions that will likely touch a lot of people and processes in an organization. For the implementation of a new DCIM to be most successful, it'll take time and careful attention to a myriad of details. The best approach is to break down the total job into steps and perform those steps one step at a time, with each new step building on the previous one.

Do Your Research

There are a lot of stakeholders who have a variety of needs. Find out who they are and gain a good understanding of them. Their needs, rather than merely what is easy to deliver, should determine what your proposed DCIM system will deliver.

It pays to take advantage of the experience of others. There are bound to be organizations similar to yours that have already implemented DCIM systems. Talk to the people who were involved in those efforts. Think about how their experience relates to what you envision for your own data center.

Get Buy-In from All Stakeholders

For something as central to the life of an organization as its data center, a lot of people with a variety of roles have an interest in the operation and performance of that data center. In some cases, such as the IT, facilities, and finance organizations, that interest is evident. In others, such as the corporate responsibility team and many others, the connection might not be so obvious, but is equally important. For something as major and potentially disruptive to the status quo as the installation of a DCIM system to succeed, all those who will be affected must understand in advance what the impact on them will be, and why this new system will be a net benefit to the organization as a whole.

It's important to know the needs and concerns of all the stakeholders and to make sure that the DCIM solution that is chosen will meet those needs and satisfy those concerns.

Set a Realistic Scope and Schedule

If you're considering deploying a DCIM system, you must have some idea of what you hope such a system will do for you. DCIM suites offer a wide range of capabilities. Some speak directly to your most important needs, others might fall into the "nice to have" category, and some may be of no interest to you at all. It is important to define the prioritized list of main goals you want to accomplish with a DCIM system, and then view the offerings of the various vendors with those goals firmly in mind. Don't be seduced by fancy features that you don't really need. Keep focused on your core requirements.

You also need to be realistic about the rollout schedule. Software projects are notorious for coming in late and over budget. Discuss timing with potential vendors. They have experience with how long it has taken their other customers to get up and running.

Document Existing Processes and Tools

Before you install a new system to maintain a data center and its operations, document what you have and how it's currently being maintained. An early step in the process is to fully document the data center assets and business processes that are currently in use. In many cases, current operations are poorly documented. Knowledge of critical operations resides in the brains of responsible employees and that knowledge tends to be transmitted verbally to new team members. Going through the process of documenting the existing assets and operational processes provides a big opportunity to optimize ongoing operations.

Determine How DCIM Will Integrate with Systems You Already Have

You may have a wide range of isolated change management systems, accounting systems, building management systems, power monitoring systems, and other systems that will interact with your new DCIM system. Remember, your DCIM system will become one of the most strategic systems in use across your entire computing environment, interfacing with everything else. Integration across all of these systems, facilitated by your DCIM suite, increases the reliability and timeliness of the data, helping to align your IT operations with your business needs.

Establish a Roster of Users

The future users of your new DCIM system should be made aware of its coming as soon as possible. Their inputs may be valuable in guiding the decisions to be made about system capabilities or priorities. In addition, the sooner they see that their world will be changing, the sooner they can acclimate themselves to the idea and be supporters, rather than resisters, of the change. The number of users and roles that can be dramatically affected by DCIM is staggering when it is deployed as a strategic approach rather than a tactical tool. In general, the more users and unique use cases you account for, the better.

Select a DCIM Vendor

Deciding on a vendor is the decision point that all of the previous steps have been leading up to. The offerings of the vendors you're considering will vary in a number of ways. Some of these variations will be more responsive to your needs than others. Additionally, you need to make an assessment of how mature each offering and vendor is.

It's good to see how long each vendor's solution has been available, and how many installations they have that are similar to your own. A large installed base instills more confidence than a small one. Vendors should be able to provide you with contact information for existing customers so that you can hear about the experiences of people who have already traveled down the path you're about to embark upon. Talk to people whose data center is similar in size and topography to yours. Make sure a proposed solution provider has experience with data centers of a size similar to yours.

A major consideration is how well each proposed solution will integrate with systems you already have in place. Your existing systems are already gathering data and performing operations. Steer away from engineering project type integrations, which are based on custom code rather than off-the-shelf integration products or connectors.

Avoid being dazzled by vendors' flashy demonstrations of simple tasks. Make sure the solutions they offer provide new levels of visibility and analysis for your world. Keep in mind that you're not looking for a prettier way of doing what you already do. You're looking for new business management insight that will enable you to make better decisions, and make them more quickly.

Because the DCIM field has many entrants, many products in the category are still developing and may provide a highly varied set of capabilities. Make sure you buy demonstrated capability, not the promise of a capability that will be available in a subsequent release. The software cemetery is full of vaporware that never quite made it to the status of real live commercially viable products.

Of course, price is always a consideration. Make sure there are no surprises and that prices are well defined. What is off the shelf and what does it cost? What custom work, if any, must be done and how will it be billed? Make sure these questions are answered definitively before you even start thinking of proceeding.

Implement Your DCIM System

At last it is time to actually deploy the DCIM software solution you have chosen. Everyone involved in the DCIM project should realize that the entire process could take several months in a very large data center, and should remain patient throughout. The value to the organization will be well worth the wait.

It is critically important that all of the information and processes from all the sources that have previously supported the data center be transferred to the DCIM system, and that the original tools and methods then be retired and eliminated as an alternative way of doing things. They have done their job, but from now on there must be only one DCIM-based go-to repository for all information about the data center. The new DCIM solution will now perform all functions previously performed by other tools, and hold all the information about the makeup and status of the data center.

Train Your Users

User training is critical. You could have the most powerful system in the world, but if your users don't know how to use it properly, the whole effort will have been wasted and they will look for ways around it. Different users will require knowledge of different aspects of the system, so training should be customized to the needs of each individual. Enough time should be devoted to training to assure that each user not only knows how to use the system but is comfortable doing so. After getting over the hump of learning the system, users will look to the DCIM system as the easiest, quickest, and most efficient way to get the information that they need. To budget an amount of time for training, a good rule of thumb would be to allocate a week per small group who all perform the same job functions.

Celebrate!

Once your DCIM system is in production and things are running smoothly, take time out to recognize and reward all the people who have contributed to the success of this major effort. Include top management in the process. They should be made aware of what has been achieved and how it will boost the organization's effectiveness. Senior management has invested in this journey and it is critically important that you help them understand the positive effects it is having on the business.